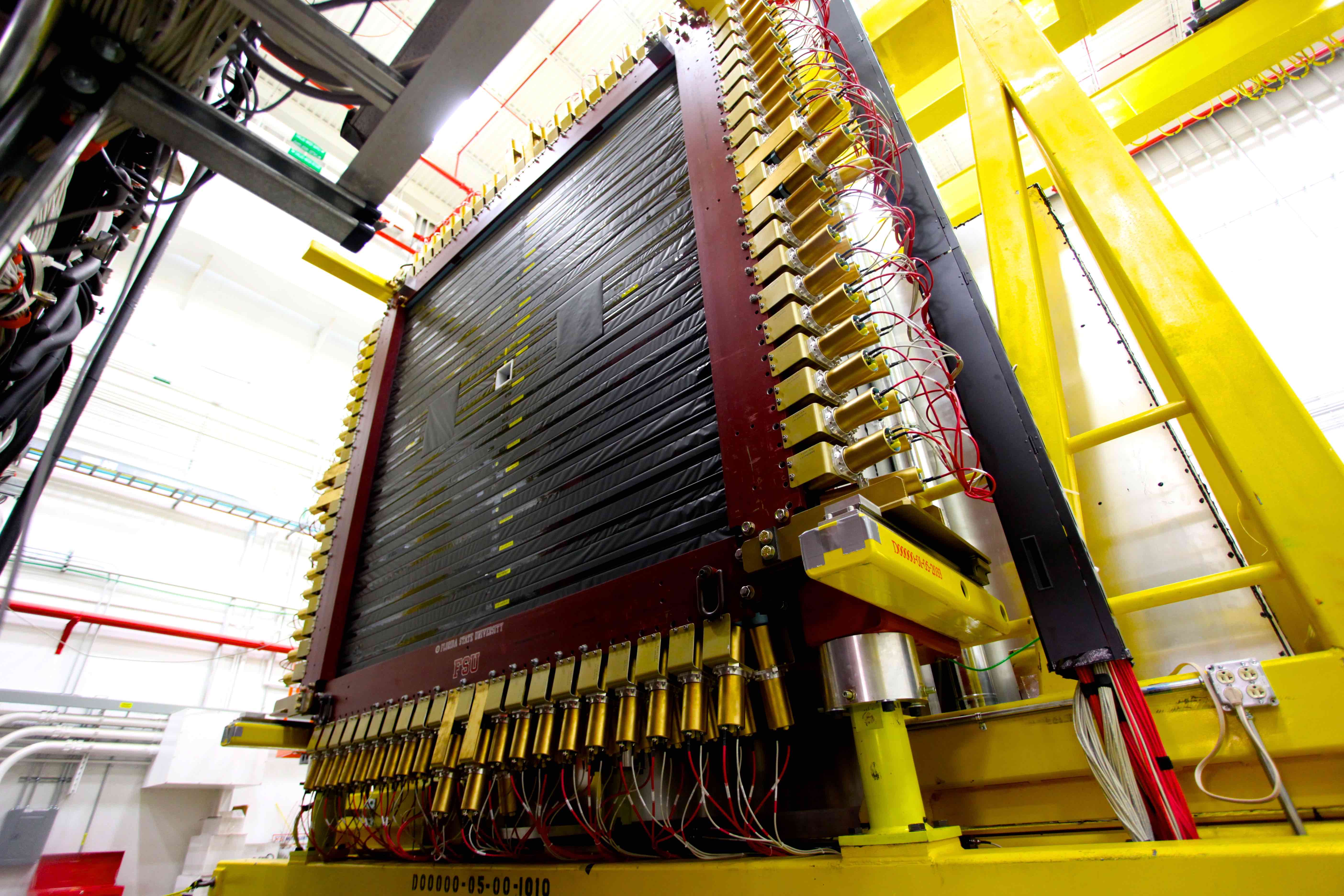

The time-of-flight detector is part of the GlueX detector system in Jefferson Lab's Experimental Hall D.

Preparing for datasets beyond the petascale

The GlueX apparatus at the Department of Energy’s Thomas Jefferson National Accelerator Facility produces more than a petabyte (one million gigabytes) of data every year it runs, and that number is expected to double or triple in the near future. From its birthplace in the detector to its final state as stored data, each bit of information in these large datasets passes through a pipeline of hardware and software that eventually funnels into the JLab Analysis Framework, called JANA, for processing. Now, JANA is getting an overhaul.

“Detectors will eventually read out exabytes of information instead of petabytes,” said Jefferson Lab Staff Scientist David Lawrence. He is part of the Gluonic Excitations collaboration, a group of nuclear physicists who are using GlueX to probe the nucleus of the atom for clues about the strong force that holds the nucleus together. Lawrence and his team are updating the JANA framework in a project funded by the 2019 Laboratory Directed Research and Development (LDRD) program.

The update will maximize JANA’s parallel-processing power while keeping it user-friendly and flexible enough to be used with a variety of detectors and supercomputing centers. Work on JANA began in 2005, and it has been in use with GlueX from day one. It could also be useful for future experiments at an Electron-Ion Collider or for other projects that require handling a high volume of data.

“Moving to a newer framework that is more modern will better position us to accommodate the higher data volumes that we know are going to come along,” Lawrence said.

JANA is a powerful library of software tools designed for high-energy physics data processing. It was written to take advantage of multi-core hardware. As supercomputing centers become more powerful, they have not only hundreds of computers, or nodes, working in parallel to process data, but they also use computers with multiple cores inside each machine. Multi-threaded software is written specifically to take advantage of the processing power of multi-core machines.
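To make the idea of event-level parallelism concrete, here is a minimal sketch written in Python rather than in JANA's own C++. It assumes that events are independent of one another, so each worker thread in a pool can take the next event as soon as it is free. The function `reconstruct` and the toy events are hypothetical and are not part of JANA. In standard CPython, threads illustrate the scheduling pattern rather than a true multi-core speedup for CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple


def reconstruct(event: Tuple[int, List[float]]) -> Tuple[int, float]:
    """Toy 'reconstruction': sum the energy deposits of one event."""
    event_id, deposits = event
    return event_id, sum(deposits)


# Made-up events: (event id, list of energy deposits).
events = [(i, [0.5 * i, 1.0, 2.0]) for i in range(8)]

# Each worker thread pulls the next unprocessed event; map() returns
# results in the same order as the input, whichever thread finishes first.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(reconstruct, events))

print(results[:3])
```

The code that handles a single event contains no locking or thread management. That division of labor, with the framework owning the threads and the user owning the per-event logic, is the point of the sketch.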

Nathan Brei, a computer scientist hired to work on the project, says that the updated JANA (JANA2) will let nuclear physicists process data using cutting-edge algorithms without being experts in writing parallel code.

“As long as physicists use JANA as designed, they won't have to worry about the subtleties of parallel programming,” Brei explained. “By organizing different processing steps into separate components, they won't have to worry about interfering with each other's work, either. Rather, they each contribute their own little jigsaw pieces and let JANA assemble the puzzle.”
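The jigsaw picture can be illustrated with a small sketch. It is not JANA's API: the `Framework` class, its `register` and `get` methods, and the "hits" and "tracks" components are all hypothetical. Each component states only what it produces and what it needs, and the framework works out the order in which to run them.

```python
from typing import Callable, Dict, Tuple

# A component is (names of the inputs it needs, function that builds its output).
Component = Tuple[Tuple[str, ...], Callable[..., object]]


class Framework:
    """Hypothetical mini-framework that assembles independent components."""

    def __init__(self) -> None:
        self._components: Dict[str, Component] = {}

    def register(self, produces: str, needs: Tuple[str, ...],
                 build: Callable[..., object]) -> None:
        self._components[produces] = (needs, build)

    def get(self, name: str, event: Dict[str, object]) -> object:
        """Return `name` for this event, building prerequisites on demand."""
        if name not in event:
            needs, build = self._components[name]
            inputs = [self.get(dep, event) for dep in needs]
            event[name] = build(*inputs)  # cache the result on the event
        return event[name]


framework = Framework()
# Two contributors register their pieces without knowing about each other.
framework.register("hits", ("raw",), lambda raw: [x for x in raw if x > 0])
framework.register("tracks", ("hits",), lambda hits: len(hits) // 2)

event = {"raw": [3, -1, 7, 2, 0, 5]}
print(framework.get("tracks", event))
```

Asking for "tracks" causes "hits" to be built first, even though the author of the tracking piece never calls the hit-finding code directly.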

Real-Time Data Processing

Data from the GlueX detector pours out at a rate of about 1 GB per second. The raw data for each individual particle collision event is about 12 kB in size, and hundreds of billions of events may be produced in a single experiment. But due to memory constraints, not all of the data can be kept. Making a decision about what to keep without seeing exactly what is in the data is a guessing game physicists would prefer not to play.
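As a back-of-the-envelope check, the figures above can be combined in a few lines of Python. The sketch assumes decimal units (1 GB = 10^9 bytes, 1 kB = 10^3 bytes) and takes 100 billion events as the low end of "hundreds of billions"; both are assumptions made for illustration.

```python
# Figures quoted in the article; decimal units are an assumption.
DATA_RATE_BYTES_PER_S = 1e9   # about 1 GB per second
EVENT_SIZE_BYTES = 12e3       # about 12 kB per event

# Assumed for illustration: the low end of "hundreds of billions" of events.
N_EVENTS = 100e9

events_per_second = DATA_RATE_BYTES_PER_S / EVENT_SIZE_BYTES
total_bytes = N_EVENTS * EVENT_SIZE_BYTES
seconds_needed = total_bytes / DATA_RATE_BYTES_PER_S

print(f"events per second: {events_per_second:,.0f}")
print(f"raw data volume:   {total_bytes / 1e15:.1f} PB")
print(f"time at full rate: {seconds_needed / 86400:.1f} days")
```

Under these assumptions the detector delivers roughly 83,000 events per second, and 100 billion events amount to about 1.2 petabytes, or about two weeks of continuous running at the full rate. That is consistent with the "more than a petabyte" per year quoted earlier.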

Currently, event data is sifted by the data acquisition system, where a trigger flags which bits contain events that will be archived. Those events are unpacked and reconstructed later, further down the processing pipeline, where the JANA framework may be used.

One goal of the LDRD project is for JANA2 to eventually support data processing in real time. Programmers could then write “software triggers” that use ever more sophisticated algorithms to sift through the petabytes of data while it is still streaming out of the detector, picking the events that will be archived for later analysis.
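A software trigger of this kind can be pictured as a filter applied to events one at a time as they arrive, rather than to a stored file. The sketch below is illustrative only: the `Event` class, the `software_trigger` and `energy_above` functions, and the energy values are all made up, and a real trigger would apply far richer selection rules.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Event:
    """Hypothetical stand-in for one collision event (not a JANA type)."""
    event_id: int
    total_energy_gev: float  # assumed summary quantity, for illustration only


def software_trigger(stream: Iterable[Event],
                     keep: Callable[[Event], bool]) -> Iterator[Event]:
    """Yield only the events that pass `keep`, one at a time as they arrive."""
    for event in stream:
        if keep(event):
            yield event


def energy_above(threshold_gev: float) -> Callable[[Event], bool]:
    """Build a simple selection rule based on a single threshold."""
    return lambda event: event.total_energy_gev > threshold_gev


# Made-up events standing in for a live detector stream.
incoming = (Event(i, energy)
            for i, energy in enumerate([0.4, 3.1, 0.9, 5.6, 2.2]))
archived = [e.event_id for e in software_trigger(incoming, energy_above(2.0))]
print(archived)
```

Because both the input and the filter are generators, no event is held in memory longer than it takes to decide whether to keep it, which is the property a streaming trigger needs.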

Another goal of Brei's work is to have the framework report how well it is performing, even at the individual event level. Using this additional feedback, users can adjust various 'knobs' to tune the program. For instance, they can adjust memory usage or lower the energy footprint of the processors. They can also see exactly where the bottlenecks in the code are happening, so they know which parts would benefit from further optimization.
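The kind of feedback described here can be sketched by timing each processing stage for each event and then looking for the stage that dominates. The stage names, the artificial delay, and the `process_with_timing` helper are hypothetical and do not reflect how JANA2 actually collects its metrics.

```python
import time
from collections import defaultdict
from typing import Callable, Dict, List


# Hypothetical processing stages; names and workloads are invented.
def unpack(event: int) -> int:
    return event * 2


def reconstruct(event: int) -> int:
    time.sleep(0.002)  # pretend this stage is the slow one
    return event + 1


STAGES: Dict[str, Callable[[int], int]] = {
    "unpack": unpack,
    "reconstruct": reconstruct,
}


def process_with_timing(events: List[int]) -> Dict[str, List[float]]:
    """Run each event through every stage, recording seconds spent per stage."""
    timings: Dict[str, List[float]] = defaultdict(list)
    for event in events:
        value = event
        for name, stage in STAGES.items():
            start = time.perf_counter()
            value = stage(value)
            timings[name].append(time.perf_counter() - start)
    return timings


timings = process_with_timing(list(range(20)))
totals = {name: sum(samples) for name, samples in timings.items()}
bottleneck = max(totals, key=totals.get)
for name, total in totals.items():
    print(f"{name:12s} {total * 1e3:8.2f} ms over {len(timings[name])} events")
print("bottleneck:", bottleneck)
```

Keeping one timing sample per event, rather than only a running total, is what makes it possible to spot individual slow events as well as slow stages.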

Modernizing JANA

Brei is also rewriting JANA to use modern C++ tools and techniques. Advances in the C++ language allow programmers to handle memory in a safer way, which makes the code more reliable and sometimes easier to understand. This makes it easier for users who write custom plugins. Keeping JANA2 simple also opens the door to integrating it with more complex hardware, for example for communicating with other machines on the network or for machine learning on a GPU.

“You can assign a task to all these threads, whether it’s a disentangling task or a tracking reconstruction task, or just a full event reconstruction task, and have JANA2 do it,” Lawrence said. “You fully are optimized on the hardware that you’re running on, and it makes it a lot easier for the user to implement.”

By Amelia Jaycen

Further Reading

Proposal: Development of Next Generation Parallel Event Processing Framework

Contact: Kandice Carter, Jefferson Lab Communications Office, 757-269-7263, kcarter@jlab.org