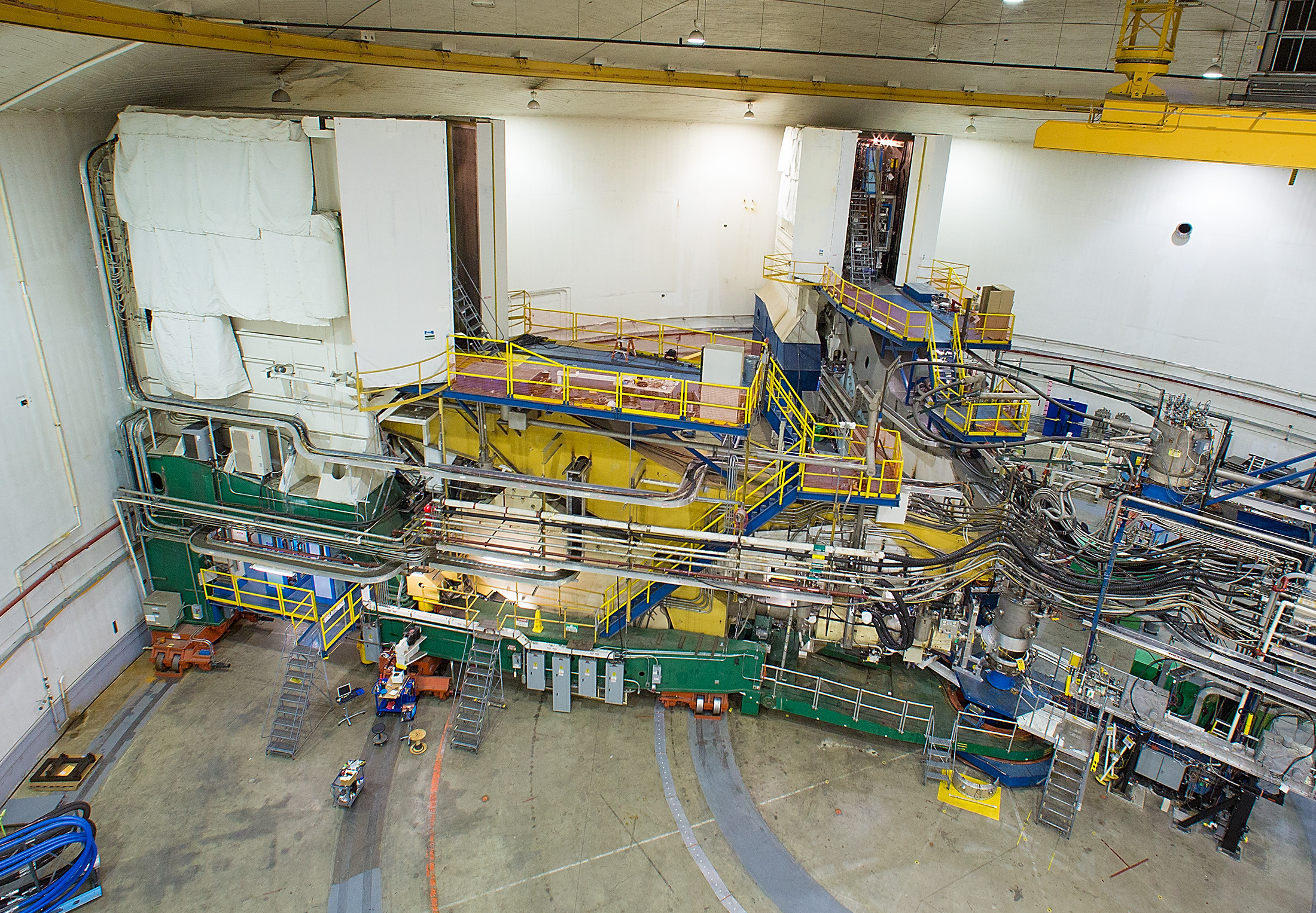

The team aims to construct a Universal Monte Carlo Event Generator, or UMCEG, as part of a multi-year demonstration project funded by the Laboratory Directed Research & Development program. For this, they are using machine learning, a technique often applied to image analysis. The UMCEG will be able to maximally capture the physics information contained in the entire sample of events collected by an experiment, such as those conducted in the lab's Experimental Hall A, shown here.

Ongoing project seeks to determine whether machine learning can save time and research dollars

NEWPORT NEWS, VA – Imagine having a telescope with the ability to spot what’s going on in a few square inches on the surface of Pluto while stationed here on Earth. That’s analogous to what nuclear physicists do in every experiment that they run, only they use microscopes called particle accelerators that they point toward the heart of matter. These accelerators send particles deep inside matter to probe the makeup of neutrons, protons and other subatomic particles that are the universe’s building blocks.

There are few particle accelerators powerful enough to do this research, so experiment time is extremely limited. Now, nuclear physicists at the Department of Energy’s Thomas Jefferson National Accelerator Facility are turning to machine learning to help ensure that they make the most of every moment.

Predicting Future Events

The interactions between accelerated particles and subatomic particles happen at distances of mere femtometers, a tiny unit of measure equivalent to a millionth of a billionth of a meter. Although the detectors that capture the results of these interactions sit meters away, scientists can use what the detectors record to study the aftermath and work out what happened at the femtoscale.

To reconstruct what happened at the subatomic level, scientists often work backward, starting with what is captured by an array of detectors. But some of the particles don’t last long enough to make the trek from the point of impact to a detector, and those that do may be distorted by the mechanics of the detectors. Also, the particles may interact as they travel, scrambling what is seen even more.

To help make sense of what may be a welter of complicated particle interaction data, experimental scientists have long used what is called a Monte Carlo event generator.

“The event generator mimics, very well to some extent, how experimentalists see the particles in the detector. It’s very useful, because it helps experimentalists calibrate their detectors,” said Nobuo Sato, a co-principal investigator on the project and the Nathan Isgur fellow in Jefferson Lab’s Center for Theoretical and Computational Physics.

However, the results calculated by a conventional Monte Carlo event generator depend on theoretical models and assumptions that guide the software as it works. If the models and assumptions are wrong or can’t handle a given situation, then standard event generators don’t work well.

According to Wally Melnitchouk, a senior staff scientist in Jefferson Lab’s Center for Theoretical and Computational Physics and the project’s co-principal investigator, a case in point can be seen in color entanglement. Quarks, which make up neutrons and protons, carry a property that physicists call color, labeled red, green and blue. Existing theory readily describes what happens when these colored quarks interact, or become entangled in quantum terminology, at high particle energies. That is not true at lower energies.

“At present we don’t know exactly how to describe that theoretically,” said Melnitchouk.

From Experiment to Theory

Since the pioneering work of Feynman in the 1970s, significant research has been devoted to theory-based Monte Carlo event generators. These event generators are now routinely used by experimentalists to study high-energy particle collisions.

However, the applicability of the existing theoretical framework is limited to high energies, making the use of such generators problematic at lower energies, such as those present in experiments conducted at Jefferson Lab.

This has led the Jefferson Lab team to consider another possible approach: construct an event generator that is agnostic about theoretical interpretations of femtometer-scale physics. Doing so would free the event generator of the shortcomings and constraints of the current understanding of the fundamentals of nature.

“It becomes this universal event generator, as distinguished from something that is based on approximations of a theory,” Sato said.

The Jefferson Lab duo aim to construct a Universal Monte Carlo Event Generator, or UMCEG, as part of a multi-year demonstration project funded by the Laboratory Directed Research & Development program. For this, they are using machine learning, a technique often applied to image analysis.

Defining the Cat

Showing a machine learning system many pictures and depictions of cats and non-cats enables the software to learn, through error correction and feature extraction, which images depict cats and which do not. This happens even though the software has no baseline theory as to what a cat looks like. To do this, the software needs a lot of images of as wide a variety of cats as possible.

In an analogous approach, the UMCEG will start with data that show the spectrum of particles that have been produced in real experiments from specific particle interactions at a range of energies. The software will use this information to map out the distribution of this spectrum of particles, free of theoretical interpretation, based on what has been seen in the past.
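As a rough illustration of mapping out a distribution from past observations without a theoretical model, the sketch below bins a set of observed values into a histogram and then draws new "events" from that histogram. It is a toy stand-in, not the project's method: the function names, the single observed quantity, the bin count and the range are all assumptions made for this example.

```python
import bisect
import random


def fit_histogram(samples, n_bins, lo, hi):
    """Bin observed values and return the cumulative distribution over bins.

    Hypothetical helper: values outside [lo, hi) are ignored.
    """
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for x in samples:
        if lo <= x < hi:
            # Clamp guards against floating-point rounding at the upper edge.
            counts[min(int((x - lo) / width), n_bins - 1)] += 1
    total = sum(counts)
    if total == 0:
        raise ValueError("no samples fall inside the histogram range")
    cdf, running = [], 0
    for count in counts:
        running += count
        cdf.append(running / total)
    return cdf, width


def sample_events(cdf, width, lo, n_events, rng):
    """Draw new values from the fitted histogram by inverse-CDF sampling."""
    events = []
    for _ in range(n_events):
        # Pick the first bin whose cumulative probability exceeds the draw.
        i = min(bisect.bisect_right(cdf, rng.random()), len(cdf) - 1)
        # Place the value uniformly inside the chosen bin.
        events.append(lo + (i + rng.random()) * width)
    return events


if __name__ == "__main__":
    rng = random.Random(0)
    # Stand-in for "real" measurements: the generator never sees this formula.
    observed = [rng.expovariate(1.0) for _ in range(5000)]
    cdf, width = fit_histogram(observed, n_bins=25, lo=0.0, hi=5.0)
    print(sample_events(cdf, width, 0.0, 5, rng))
```

The generator here knows only what was observed, which is the point of the analogy. A real event contains many correlated quantities per particle, which is one reason a simple histogram does not scale and a machine learning model is used instead.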

Experiments of Future Past

So, as theories advance and predict a new outcome, scientists using the UMCEG can check whether that outcome, or the observable particles that indicate it, was indeed present in the spectrum of particles produced in the original experiment.

“Some of the information, you don’t yet know how to interpret,” Melnitchouk said. “You may not even know that an observable is there in the data until later.”

Melnitchouk says this is an improvement over the current practice of re-analyzing old data, because the UMCEG will be able to maximally capture the physics information contained in the entire sample of events collected by the experiment.

The Ultimate UMCEG for Real Experiments

In the second phase of the project, the UMCEG will be integrated with detector simulators, which will train it to recognize the data that are captured by detectors and correlate them with the extracted information about the spectrum of particles.

In this detector training phase, the software will make forecasts and compare them to what comes out of an actual experiment. Any differences will be identified by an artificial neural network and sent back as error corrections. This process will repeat until the differences between the UMCEG output and actual results become small.
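The forecast, compare and correct cycle described above can be sketched with a deliberately simple feedback loop. This is only an illustration under strong assumptions: there is no neural network here, the "generator" has a single adjustable parameter, and the comparison uses just the mean of the events. All names and numeric settings are hypothetical.

```python
import random


def generate_events(scale, n, rng):
    """Toy generator: n values from an exponential distribution with mean `scale`."""
    return [rng.expovariate(1.0 / scale) for _ in range(n)]


def mean(values):
    return sum(values) / len(values)


def train(observed, n_generated=5000, step=0.5, tolerance=0.05,
          max_iters=200, seed=1):
    """Adjust the generator parameter until its output matches the data.

    Returns the fitted parameter and the size of the mismatch at each pass.
    """
    rng = random.Random(seed)
    scale = 1.0  # arbitrary starting guess
    target = mean(observed)
    history = []
    for _ in range(max_iters):
        forecast = generate_events(scale, n_generated, rng)
        difference = target - mean(forecast)  # forecast vs. experiment
        history.append(abs(difference))
        if abs(difference) < tolerance:
            break  # differences have become small
        # Feed the difference back as a correction; keep the parameter positive.
        scale = max(scale + step * difference, 1e-6)
    return scale, history


if __name__ == "__main__":
    data_rng = random.Random(0)
    # Stand-in for experimental data with a "true" mean of 2.5.
    observed = generate_events(2.5, 5000, data_rng)
    fitted_scale, history = train(observed)
    print(fitted_scale, len(history))
```

In the project as described, a neural network identifies the differences and the comparison covers far more than a single average, but the repeat-until-small structure of the loop is the same.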

In this effort, the Jefferson Lab team is working with machine learning researchers Michelle Kuchera from Davidson College and Yaohang Li from Old Dominion University. The initial goal is to produce proof-of-principle demonstration software that shows the power of the concept, with a follow-on project then fleshing out this demonstrator and making it more widely useful.

When the project is complete, scientists hope to be able to take actual detector data from experiments performed at Jefferson Lab with the Continuous Electron Beam Accelerator Facility, and use machine learning to optimally train the UMCEG to capture the full spectrum of particles produced in real experiments. As a result, the software will be able to capture all information produced by these experiments and make it available for current and future generations of researchers.

“It’s a way of maximally preserving the results of the experiment. You don’t have to repeat the experiment in the future,” Melnitchouk said.

By Hank Hogan
